Automating software tests is an essential practice for setting up an Agile development process. In particular, non-regression tests must be automated so that validation teams have time to automate new tests for the latest features, or to carry out exploratory testing.

Since we are talking about automation, let's tackle a fundamental issue: how to vary a test by feeding it from a data file. This is what software development calls data-driven testing.

What is data-driven testing?

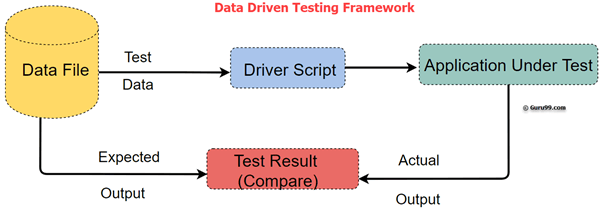

Data-driven testing (or DDT) is a software testing methodology that uses a table of conditions to populate the test's input data, its expected output data, and its environment and control variables.

The simplest way to set up DDT is to create a table in CSV format and assign one or more columns to input parameters and to expected output data. Each row then represents a data set for one iteration of the test. This approach is also called table-driven testing or parameterized testing, and it is precisely what we are going to do.
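
To make the idea concrete outside of any particular tool, here is a minimal Python sketch of a table-driven test. It is not Agilitest syntax: the CSV content, the column names (`a`, `b`, `expected`), the header row, and the function under test `add` are all assumptions chosen for illustration.

```python
import csv
import io

# Hypothetical data set: two input columns and one expected-output column.
CSV_DATA = """a,b,expected
1,2,3
10,-4,6
0,0,0
"""


def add(a: int, b: int) -> int:
    """Stand-in for the feature under test (an assumption for this sketch)."""
    return a + b


def run_data_driven_test(csv_text: str) -> list[tuple[int, bool]]:
    """Run one test iteration per CSV row and return (row_number, passed) pairs."""
    results = []
    reader = csv.DictReader(io.StringIO(csv_text))
    for row_number, row in enumerate(reader, start=1):
        actual = add(int(row["a"]), int(row["b"]))
        results.append((row_number, actual == int(row["expected"])))
    return results


if __name__ == "__main__":
    for row_number, passed in run_data_driven_test(CSV_DATA):
        print(f"row {row_number}: {'PASS' if passed else 'FAIL'}")
```

The test logic is written once; adding a new case only means adding a row to the data.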

Data-driven testing is strategic because testers frequently have multiple data sets for a single test, and creating an individual test for each data set is time-consuming and hard to maintain. Data-driven testing separates the data from the test scripts, so the same script can be executed for many combinations of input data and test results can be generated efficiently.

Data-driven testing with Agilitest

Create a project with a main ATS script

To get started, you need to create a new project or open an existing project.

We are going to create a main ATS script that will be used to hook the DDT data set to the actions of the test. This ATS script will contain a Sub-script line configured with two basic pieces of information: the name of the script to open and the path to the CSV file.

Create a CSV (or JSON) data file

Next, create a CSV file with the New component button. The file name must match the name referenced in the main ATS script. The easiest way to guarantee this is to drag and drop the CSV file into the CSV file path box; the path is then expressed relative to the root of the project, for example: assets:///data/data.csv

The CSV file is created and edited in Agilitest. You can also edit it with a standard text editor, as long as you respect its syntax (UTF-8 encoding, comma as field separator, double quotes as the framing character, and CRLF line breaks). The file can also be generated by an external routine.
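
As an illustration of such an external routine, here is a minimal Python sketch that produces CSV bytes following the syntax described above (UTF-8, comma separator, double-quote framing, CRLF line breaks). The sample rows and the function name `build_csv` are hypothetical, and the sketch does not assume anything about which columns your own scripts expect.

```python
import csv
import io

# Hypothetical sample rows: a login and a text to type for each iteration.
SAMPLE_ROWS = [
    ["alice", "Hello, world"],   # contains a comma, so it will be framed in quotes
    ["bob", 'say "hi"'],         # inner double quotes are doubled by the writer
    ["chloé", "café"],           # non-ASCII characters, encoded as UTF-8
]


def build_csv(rows: list[list[str]]) -> bytes:
    """Serialize rows as UTF-8 CSV with comma separator, double quotes and CRLF."""
    buffer = io.StringIO(newline="")  # newline="" keeps the CRLF untouched
    writer = csv.writer(
        buffer,
        delimiter=",",
        quotechar='"',
        quoting=csv.QUOTE_MINIMAL,  # only frame fields that need it
        lineterminator="\r\n",
    )
    writer.writerows(rows)
    return buffer.getvalue().encode("utf-8")


if __name__ == "__main__":
    print(build_csv(SAMPLE_ROWS).decode("utf-8"), end="")
```

The returned bytes can then be written to the data file of your project, for instance with `pathlib.Path(...).write_bytes(...)`.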

Agilitest also allows you to use a remote CSV file by entering a URL instead of assets:///data/data.csv. The CSV file can then be generated dynamically by a small web application, or simply be hosted remotely as a static file.
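
A "small web application" of this kind can be very small indeed. The following Python sketch, based only on the standard library, serves a generated CSV over HTTP. The `/data.csv` path, the port, the `Content-Type` header, and the `generate_rows` data source are assumptions made for this example; check what your own setup expects before relying on them.

```python
import csv
import io
from http.server import BaseHTTPRequestHandler, HTTPServer


def generate_rows() -> list[list[str]]:
    """Hypothetical data source; a real one might query a database."""
    return [["alice", "admin"], ["bob", "reader"]]


def render_csv(rows: list[list[str]]) -> bytes:
    """Serialize rows as UTF-8 CSV with CRLF line breaks."""
    buffer = io.StringIO(newline="")
    csv.writer(buffer, lineterminator="\r\n").writerows(rows)
    return buffer.getvalue().encode("utf-8")


class CsvHandler(BaseHTTPRequestHandler):
    """Answer GET /data.csv with a freshly generated CSV body."""

    def do_GET(self) -> None:
        if self.path != "/data.csv":  # arbitrary path chosen for this sketch
            self.send_error(404)
            return
        body = render_csv(generate_rows())
        self.send_response(200)
        self.send_header("Content-Type", "text/csv; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def make_server(port: int = 8000) -> HTTPServer:
    """Build a local server; the caller decides when to start it."""
    return HTTPServer(("127.0.0.1", port), CsvHandler)
```

Calling `make_server().serve_forever()` would expose the data at `http://127.0.0.1:8000/data.csv`, and each request would rebuild the CSV from the current data.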

The fields of your CSV files can contain text to be entered on the keyboard, text to be recognized in the application, or even attributes to be recognized on the page (CSS classes or ids, desktop attributes, etc.). Finally, they can contain values to compare with fields in your application.

Create a secondary ATS script

To create the secondary ATS file, right-click on the subscripts folder and click on New ATS script.

The secondary ATS file will contain all the desired actions, from opening the channel to closing it. It is good practice to close the channel at the end of the script so that the channel (Chrome, Firefox, etc.) can itself be turned into a parameter if needed.

Another way to create the secondary script is to build all the actions in a single script, then select the ATS actions to be isolated and drag and drop them into the subscripts folder. This creates sub-scripts in a natural way and makes it easy to parameterize their actions.

Exploiting the test data

The data in your CSV files can be of different kinds:

- text to be typed in a given target

- text to be recognized in `<tag>content of an HTML tag</tag>`

- value of an HTML attribute of type class, id, or other

- text to be compared with an Agilitest variable captured with the Property action

- text to be compared using the Check > Values action

- name of a variable to be retrieved and which contains the searched value

What types of data-driven tests will I be able to perform?

Data-driven testing with Agilitest consists of executing a sub-script fed by a CSV data file where each column is a parameter and each row corresponds to a test iteration.

Here are some examples:

- Perform tests on the access control of an application based on user roles. In this case you can manage a CSV file that includes the login data and the privileges to be checked. A simple way to do this is to have a master script call several successive sub-scripts: login, then one sub-script for each type of check, and logout, before moving on to the next user.

- Perform tests on the transitions of an object subject to a workflow or a state graph: here too, it is very simple to check which transitions are possible from one state to another. This requires the ability to generate successive objects in a given state, which is easy to do with a sub-script.

- Give depth to your functional tests: you can turn an existing functional test into a sub-script and parameterize it so that it can be called with a CSV data file. This lets you check regularly that you have no data-related bugs, which are generally the ones reported by your customers even when you have carried out very thorough functional tests of your application.

These are just three examples among many others. Agilitest allows you to go very far in your testing process to cover a maximum number of cases.
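
To illustrate the first example, here is a tool-agnostic Python sketch of the access-control scenario: for each row, log in, run one check per privilege, then log out. It is not ATS syntax. The `FakeApp` class, the column names (`login`, `password`, `can_read`, `can_edit`), and the data are hypothetical stand-ins for your application and your CSV file.

```python
import csv
import io

# Hypothetical data set: one row per user, with the privileges expected for each.
CSV_DATA = """login,password,can_read,can_edit
alice,secret1,yes,yes
bob,secret2,yes,no
"""


class FakeApp:
    """Stand-in for the application under test; not a real API."""

    _grants = {"alice": {"read", "edit"}, "bob": {"read"}}

    def __init__(self) -> None:
        self.user = ""

    def login(self, user: str, password: str) -> None:
        self.user = user  # the password is ignored in this fake

    def is_allowed(self, action: str) -> bool:
        return action in self._grants.get(self.user, set())

    def logout(self) -> None:
        self.user = ""


def check_user(app: FakeApp, row: dict[str, str]) -> list[str]:
    """Login, one check per privilege column, logout. Return the failures."""
    failures = []
    app.login(row["login"], row["password"])
    for action in ("read", "edit"):
        expected = row[f"can_{action}"] == "yes"
        if app.is_allowed(action) != expected:
            failures.append(f"{row['login']}: {action} expected {expected}")
    app.logout()
    return failures


def run(csv_text: str) -> list[str]:
    """Master loop: one iteration per CSV row, i.e. per user."""
    app = FakeApp()
    failures = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        failures.extend(check_user(app, row))
    return failures


if __name__ == "__main__":
    print(run(CSV_DATA) or "all access-control checks passed")
```

Here `run` plays the role of the master script and `check_user` the role of the chained sub-scripts; covering a new role only requires a new row in the data.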
